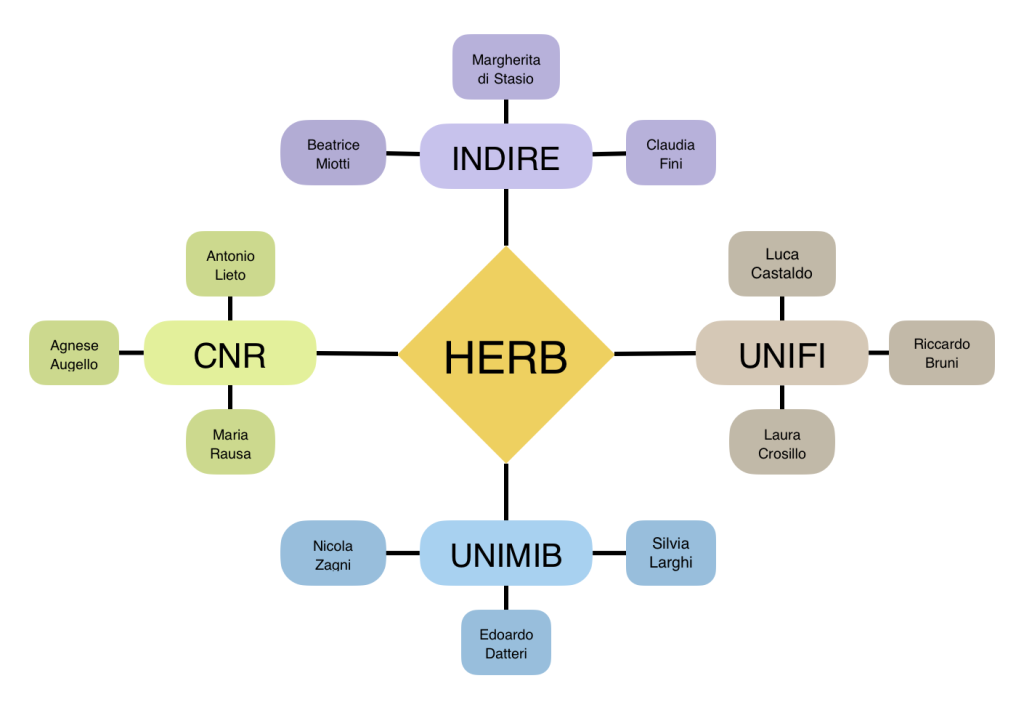

UNIMIB (RobotiCSS Lab):

Edoardo Datteri

As a philosopher of science, I primarily work on the methodological foundations of biorobotics, Artificial Intelligence, and Cognitive Science. More specifically, I reconstruct and analyze the validity of methodologies involving robots and bionic systems, as well as robots interacting with animals and humans, to study living system behavior and cognition.

My interests also concern, still from a methodological perspective, the role of robots as tools to intervene in and theorize about the mechanisms of social cognition, as well as the methodological foundations of educational robotics.

edoardo.datteri@unimib.it

Silvia Larghi

I have a background in computer science and engineering. After an internship in robotics at the JRC – Ispra (VA), I worked in software engineering, participating in EC-funded international research projects. I taught technology in school, where I coordinated the team for digital innovation. For several years I have been designing and running educational robotics and artificial intelligence workshops in schools, as well as training courses for teachers.

My research interests concern the philosophy of Robotics and Artificial Intelligence, the philosophy of Cognitive Sciences, and Human-Robot Interaction.

silvia.larghi1@campus.unimib.it

Nicola Zagni

He graduated in Philosophy from the University of Bologna with a thesis on the Epistemology of Machine Learning. He has a background as a robotics educator in K-12 schools. His research interests are scientific explanation, the intersection of cognitive science and artificial intelligence, robotics, and the ethics of AI.

nicola.zagni@unimib.it